What Is Human-in-the-Loop AI?

Human-in-the-loop AI is an artificial intelligence design approach in which humans actively participate in training, evaluating, or correcting machine learning systems to improve accuracy and reliability.

Defining Human-in-the-Loop AI

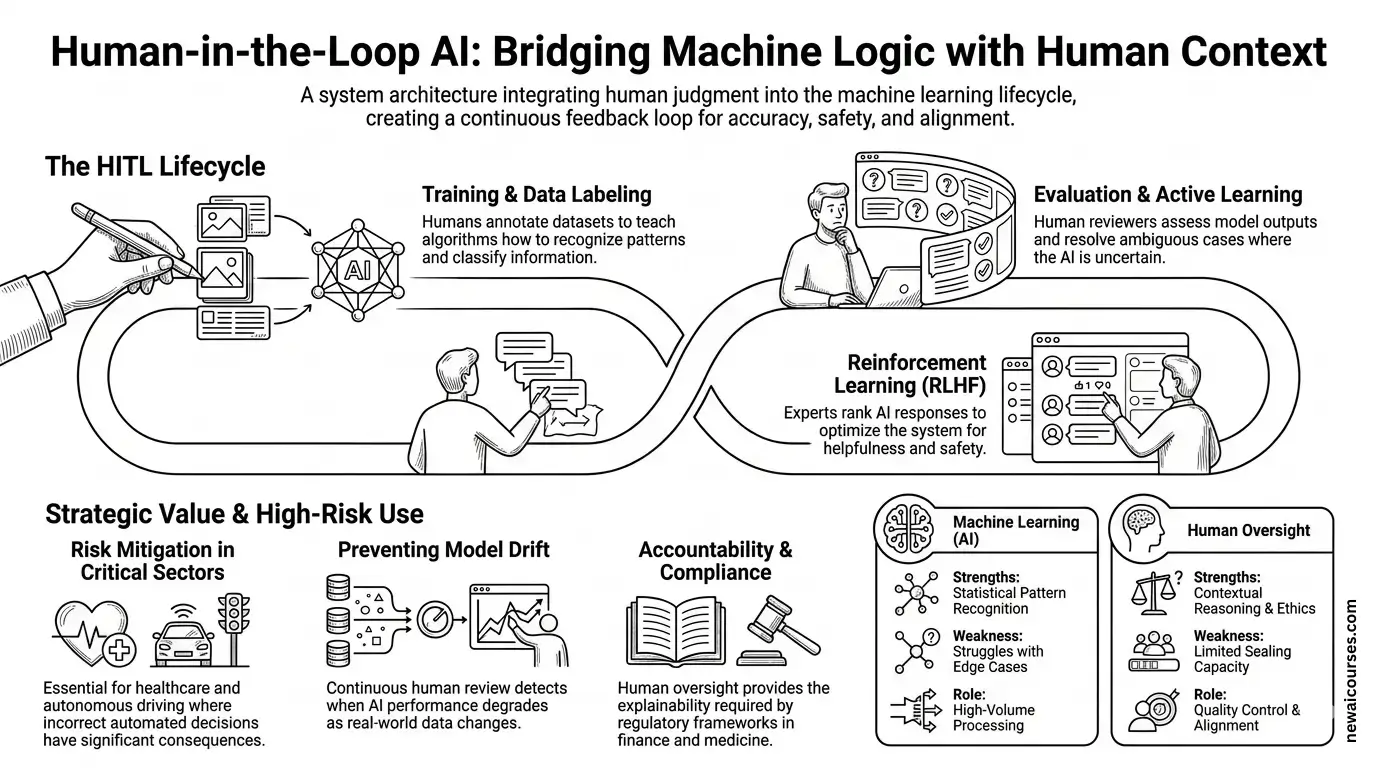

Human-in-the-loop (HITL) AI refers to a system architecture where human judgment is intentionally integrated into the lifecycle of an artificial intelligence model. Instead of operating entirely autonomously, the system relies on human participation during data labeling, model training, validation, or real-time decision review.

The concept is grounded in machine learning workflows where algorithms learn from datasets but still require human supervision to guide interpretation, resolve ambiguous cases, and correct errors. In practice, HITL systems create a feedback loop in which machine outputs are evaluated by humans, and the resulting corrections or annotations are incorporated into subsequent model updates.
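The feedback loop described above can be sketched in a few lines. This is a minimal illustration, not a production design: `FeedbackLoop`, `toy_model`, and the sample messages are all hypothetical names invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class FeedbackLoop:
    """Collects human-reviewed labels so they can feed the next model update."""
    training_examples: list = field(default_factory=list)

    def review(self, item, model_label, human_label):
        # The reviewer's label is stored as the training signal for the next update.
        self.training_examples.append((item, human_label))
        # Report whether the reviewer had to correct the model.
        return model_label != human_label


def toy_model(text):
    # Hypothetical stand-in for a trained classifier.
    return "spam" if "free" in text.lower() else "ham"


loop = FeedbackLoop()
inbox = ["Free tickets inside", "Feel free to call me", "Meeting at 10"]
human_labels = ["spam", "ham", "ham"]  # supplied by a human reviewer

# Count how many model outputs the reviewer overrode.
corrections = sum(
    loop.review(text, toy_model(text), label)
    for text, label in zip(inbox, human_labels)
)
```

Here the reviewer overrides one of the three predictions, and all three reviewed examples become available for retraining.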

Organizations such as Google, Microsoft, and Amazon integrate human oversight into large-scale AI systems to maintain quality control in tasks such as image recognition, content moderation, and natural language processing.

Origins of the Human-in-the-Loop Concept

The principle behind HITL systems is rooted in early machine learning research on supervised learning, which depends on labeled datasets. In supervised learning, algorithms learn patterns by analyzing examples that humans have already classified.

Research from organizations such as Stanford University and the MIT Computer Science and Artificial Intelligence Laboratory has highlighted that many machine learning models cannot achieve high performance without carefully labeled training data. Humans therefore play a crucial role in producing annotations that guide algorithmic learning.

The concept evolved further with the development of active learning techniques, in which algorithms identify uncertain predictions and request human input specifically for those cases. This targeted collaboration allows models to improve more efficiently while reducing the volume of human annotation required.
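One common way to implement this idea is uncertainty sampling, where the items with the least certain predictions are sent to annotators first. The sketch below uses prediction entropy as the uncertainty measure; the function names, item ids, and probabilities are assumptions made for illustration.

```python
import math


def entropy(probs):
    """Shannon entropy of a predicted class distribution (higher = less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions, budget):
    """Return the ids of the `budget` items the model is least sure about."""
    ranked = sorted(
        predictions,
        key=lambda item_id: entropy(predictions[item_id]),
        reverse=True,
    )
    return ranked[:budget]


# Hypothetical class probabilities for four unlabeled items.
predictions = {
    "img_a": [0.98, 0.02],
    "img_b": [0.55, 0.45],
    "img_c": [0.80, 0.20],
    "img_d": [0.51, 0.49],
}

to_label = select_for_annotation(predictions, budget=2)
```

With a budget of two, the near coin-flip predictions (`img_d` and `img_b`) are selected, while the confident ones are left unlabeled.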

The Role of Human Oversight in Machine Learning Systems

Figure: Infographic of human-in-the-loop in artificial intelligence systems.

In human-in-the-loop architectures, people typically intervene at multiple stages of the machine learning lifecycle. During the training phase, humans label the datasets used to teach algorithms how to recognize patterns. In the evaluation phase, human reviewers assess model outputs and identify incorrect predictions.

This oversight is particularly important when algorithms encounter edge cases or ambiguous data. Machine learning models operate through statistical pattern recognition, which means they can struggle when presented with unfamiliar inputs. Human reviewers provide contextual reasoning that machines cannot fully replicate.

Companies developing large-scale AI services frequently rely on dedicated annotation platforms to facilitate these interactions. For example, Scale AI provides data-labeling infrastructure used by organizations training computer vision and autonomous systems, while Appen coordinates global workforces that annotate training datasets for machine learning models.

Human Feedback in Modern AI Training

Human-in-the-loop methods have become especially prominent in the training of advanced language models. Reinforcement learning techniques often incorporate structured human feedback to refine model behavior after initial training.

One well-known example is reinforcement learning from human feedback (RLHF), a training method used by organizations such as OpenAI and Anthropic. In this process, human evaluators compare different outputs generated by a language model and rank them according to quality, accuracy, or helpfulness. These rankings are used to train a reward model that guides further optimization of the AI system.

This approach enables language models to align more closely with human expectations. Instead of optimizing solely for the statistical likelihood of text sequences, the model learns to prioritize responses that humans judge to be more useful or appropriate.
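The reward-model step can be illustrated with a pairwise preference loss. The Bradley-Terry form below is a common formulation in published RLHF work, but it is shown here as an assumption, not as the exact objective any particular organization uses. The function name and scores are hypothetical.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Negative log-likelihood that the human-preferred response wins."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(margin)), written as log(1 + exp(-margin)).
    return math.log1p(math.exp(-margin))


# Hypothetical reward-model scores for two responses to the same prompt.
agrees = preference_loss(reward_chosen=2.0, reward_rejected=0.5)
disagrees = preference_loss(reward_chosen=0.5, reward_rejected=2.0)
tie = preference_loss(reward_chosen=1.0, reward_rejected=1.0)
```

The loss is small when the reward model scores the human-preferred response higher and large when it scores the rejected response higher, so minimizing it pushes the reward model toward the evaluators' rankings.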

Human-in-the-Loop in High-Risk Applications

The integration of human oversight becomes especially important in domains where incorrect decisions could have significant consequences. In healthcare, financial systems, and autonomous technologies, purely automated decision-making can introduce risks that require human verification.

Medical imaging provides a clear example. AI systems trained to detect abnormalities in radiology scans may assist physicians by highlighting suspicious regions in an image. However, clinical decisions remain the responsibility of trained professionals who review the AI’s suggestions. Research published by organizations such as the National Institutes of Health emphasizes that AI diagnostic tools are most effective when used to augment rather than replace medical expertise.

Autonomous vehicle development also relies heavily on human oversight. Companies such as Tesla and Waymo incorporate human review of edge cases that their driving systems encounter. Engineers analyze unusual road scenarios and use those examples to refine perception and decision-making algorithms.

Advantages of the Human-in-the-Loop Approach

Human-in-the-loop AI provides several technical benefits that improve system performance and reliability. Human judgment allows machine learning models to handle ambiguous inputs, interpret context, and correct biases that arise from incomplete or unbalanced training data.

Human participation also helps detect model drift, a phenomenon in which an algorithm’s performance degrades over time as real-world data diverges from the training dataset. Continuous human review helps ensure that such changes are identified and addressed through retraining or dataset updates.
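One simple way to surface drift from human review is to track how often reviewers agree with the model over a rolling window. The sketch below assumes a known baseline accuracy. The `DriftMonitor` class, window size, and tolerance are hypothetical choices for illustration.

```python
from collections import deque


class DriftMonitor:
    """Tracks agreement between model predictions and human review labels."""

    def __init__(self, baseline_accuracy, window=100, tolerance=0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)  # 1 = model agreed with reviewer

    def record(self, model_label, human_label):
        self.recent.append(1 if model_label == human_label else 0)

    def drifting(self):
        # Wait for a full window so a few early misses do not trigger an alert.
        if len(self.recent) < self.recent.maxlen:
            return False
        accuracy = sum(self.recent) / len(self.recent)
        return accuracy < self.baseline - self.tolerance


monitor = DriftMonitor(baseline_accuracy=0.90, window=10, tolerance=0.05)
for _ in range(10):
    monitor.record("cat", "cat")  # model and reviewer agree
healthy = monitor.drifting()

for _ in range(3):
    monitor.record("cat", "dog")  # reviewer starts overriding the model
degraded = monitor.drifting()
```

Once reviewers override three of the last ten predictions, windowed accuracy falls below the tolerated level and the monitor flags the model for retraining or a dataset update.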

Another advantage is accountability. When artificial intelligence systems operate with human oversight, decisions can be reviewed and explained more easily. Regulatory frameworks in sectors such as finance and healthcare often require documented human supervision in automated systems, making HITL architectures a practical design strategy for compliance.

Challenges and Operational Constraints

Despite its advantages, the human-in-the-loop model introduces operational complexities. Human review processes can slow down decision-making, particularly in systems designed for high-volume or real-time processing. Balancing automation with human oversight therefore requires careful system design.

Scaling human supervision is another challenge. Large machine learning models may process millions of inputs, making it impractical for humans to review every output. Developers address this limitation by implementing selective review strategies in which human intervention occurs only when the model’s confidence level falls below a predefined threshold.
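A selective review strategy of this kind can be as small as a single routing function. The threshold value, labels, and function name below are assumptions for illustration; a real cutoff would be tuned for the application.

```python
REVIEW_THRESHOLD = 0.85  # hypothetical cutoff; tune per application


def route(prediction, confidence, threshold=REVIEW_THRESHOLD):
    """Accept confident predictions automatically; queue the rest for a person."""
    if confidence < threshold:
        return ("human_review", prediction)
    return ("auto_accept", prediction)


# Hypothetical (label, confidence) pairs produced by a model.
outputs = [("approve", 0.97), ("reject", 0.62), ("approve", 0.85)]
decisions = [route(label, conf) for label, conf in outputs]
review_queue = [d for d in decisions if d[0] == "human_review"]
```

Only the low-confidence prediction reaches the review queue, which keeps reviewer workload proportional to the number of uncertain cases, not to total volume.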

Cost considerations also play a role. Maintaining large annotation workforces or expert review teams can increase the operational expenses associated with AI development. Companies therefore combine automation with targeted human input to maintain efficiency.

Distinguishing HITL AI From Fully Autonomous AI

Human-in-the-loop systems differ fundamentally from fully autonomous AI systems that operate without ongoing human intervention. In autonomous systems, once the model is trained and deployed, it makes decisions independently based on learned patterns.

HITL architectures, by contrast, intentionally retain human involvement as part of the system’s operational design. Humans remain responsible for monitoring outputs, correcting errors, and providing new training signals when needed. This collaborative structure recognizes that while machine learning models excel at pattern recognition across large datasets, they still lack the contextual reasoning and ethical judgment that humans contribute.

The distinction is particularly relevant in regulatory and safety discussions. Many policymakers and technology organizations advocate for maintaining human oversight in critical AI applications to ensure transparency and accountability.

The Future Role of Human-in-the-Loop AI

Human-in-the-loop methodologies are expected to remain central to the development of reliable artificial intelligence systems. As models grow more complex and are deployed in increasingly sensitive environments, structured human feedback will continue to serve as a mechanism for maintaining quality, safety, and alignment with human values.

Advances in active learning, automated data selection, and hybrid human-machine workflows are already improving how these systems operate. By focusing human attention on the most difficult or uncertain cases, developers can combine the speed of automated algorithms with the contextual intelligence of human decision-makers.

The resulting collaboration between humans and machines reflects a pragmatic understanding of current AI capabilities. Rather than replacing human expertise, human-in-the-loop AI uses it as an integral component of the system, ensuring that artificial intelligence remains both technically effective and operationally accountable.

