What Is Retrieval-Augmented Generation (RAG)?

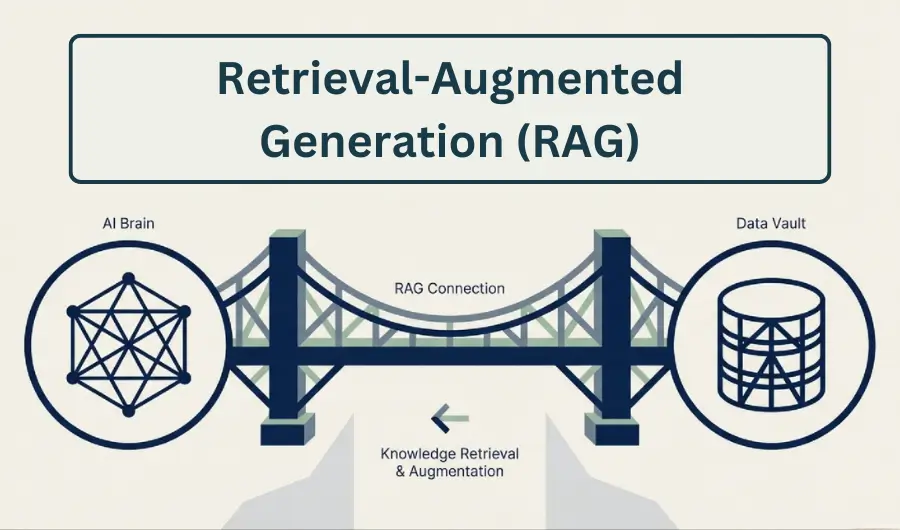

Retrieval-Augmented Generation (RAG) is an AI architecture that improves generative models by retrieving relevant external information before producing a response.

Defining Retrieval-Augmented Generation

Retrieval-Augmented Generation (RAG) is a method for enhancing large language model outputs by combining neural text generation with external information retrieval. The approach enables an AI model to consult external data sources during the generation process rather than relying solely on knowledge embedded in its training parameters.

The concept was formally introduced in the 2020 paper "Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks," written by Patrick Lewis and colleagues at Facebook AI Research, University College London, and New York University. The paper combined a pretrained sequence-to-sequence generator, which stores knowledge in its parameters, with a dense vector index of Wikipedia accessed through a neural retriever. This let the system dynamically fetch relevant passages and condition its responses on the retrieved text.
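The paper's formulation makes the retrieval step explicit. In its notation, x is the input, y is an output sequence of N tokens, and z is a retrieved passage. The retriever p_η(z | x) scores passages by the inner product of a document embedding d(z) and a query embedding q(x), and the generator p_θ produces tokens conditioned on both x and z. Because the retrieved passage is treated as a latent variable, the model marginalizes over the top-k passages. The RAG-Sequence variant uses the same passage for the whole answer, while the RAG-Token variant marginalizes separately for each generated token:

```latex
\begin{aligned}
p_\eta(z \mid x) &\propto \exp\big(\mathbf{d}(z)^\top \mathbf{q}(x)\big) \\
p_{\text{RAG-Sequence}}(y \mid x) &\approx \sum_{z \in \text{top-}k(p_\eta(\cdot \mid x))} p_\eta(z \mid x) \prod_{i=1}^{N} p_\theta(y_i \mid x, z, y_{1:i-1}) \\
p_{\text{RAG-Token}}(y \mid x) &\approx \prod_{i=1}^{N} \sum_{z \in \text{top-}k(p_\eta(\cdot \mid x))} p_\eta(z \mid x)\, p_\theta(y_i \mid x, z, y_{1:i-1})
\end{aligned}
```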

Traditional large language models store knowledge within their neural network weights, learned during training. RAG systems extend this capability by allowing models to access external document collections at inference time, which reduces dependence on static training data and improves factual accuracy in knowledge-intensive tasks.

Why Retrieval Is Necessary for Generative AI

Generative AI models such as those based on transformer architectures learn patterns from large datasets during training. Once trained, these models generate outputs by predicting the next token in a sequence based on learned probability distributions. Although this enables powerful language generation, it also creates limitations.

First, the model’s knowledge is constrained by the data available during training and cannot automatically incorporate newly published information. Second, models may produce confident but incorrect statements when they lack sufficient information, a phenomenon widely described in AI research as hallucination.

RAG addresses these limitations by integrating a retrieval stage into the generation pipeline. Instead of relying entirely on memorized knowledge, the model first searches a database or document corpus to find relevant context. That context is then provided to the generative model, allowing it to produce responses grounded in retrieved evidence.

This architecture allows generative systems to remain flexible and current, since the underlying knowledge base can be updated independently of the model’s training process.

Core Architecture of RAG Systems

A typical Retrieval-Augmented Generation system contains three primary components: a retriever, a knowledge source, and a generative model.

The retriever is responsible for identifying relevant documents from a data repository. Modern RAG systems commonly use dense vector search techniques, where documents are encoded as embeddings and stored in a vector database. When a query is submitted, the system converts the query into an embedding and retrieves documents with the most similar vector representations.
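The encode, store, and search cycle described above can be sketched in a few lines of Python. This is a toy under stated assumptions. `ToyVectorStore` is a hypothetical in-memory stand-in for a vector database. Its `embed` method builds L2-normalized word-count vectors over the corpus vocabulary as a substitute for a trained neural encoder. No real embedding model or vector database API is used.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


class ToyVectorStore:
    """Hypothetical in-memory stand-in for a vector database (illustrative only)."""

    def __init__(self, documents: list[str]) -> None:
        self.documents = documents
        # Fixed vocabulary from the corpus: one vector dimension per word.
        self.vocab = sorted({w for doc in documents for w in tokenize(doc)})
        self.position = {w: i for i, w in enumerate(self.vocab)}
        # Encode every document once, at indexing time.
        self.vectors = [self.embed(doc) for doc in documents]

    def embed(self, text: str) -> list[float]:
        """Toy encoder: L2-normalized word counts over the corpus vocabulary.
        A real RAG system would call a trained embedding model here."""
        vec = [0.0] * len(self.vocab)
        for word, count in Counter(tokenize(text)).items():
            if word in self.position:  # words outside the vocabulary are ignored
                vec[self.position[word]] = float(count)
        norm = math.sqrt(sum(x * x for x in vec))
        return [x / norm for x in vec] if norm else vec

    def search(self, query: str, k: int = 2) -> list[tuple[float, str]]:
        """Embed the query and return the k most similar documents.
        Non-zero vectors are unit length, so the dot product equals cosine
        similarity; an all-zero vector simply scores 0."""
        q = self.embed(query)
        scored = [
            (sum(a * b for a, b in zip(q, v)), doc)
            for v, doc in zip(self.vectors, self.documents)
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


store = ToyVectorStore([
    "RAG retrieves documents before generating an answer.",
    "BM25 is a keyword ranking function.",
    "Transformers predict the next token.",
])
for score, doc in store.search("How does RAG retrieve documents?", k=1):
    print(f"{score:.2f}  {doc}")
```

Because this toy encoder only matches exact words, "retrieve" in the query does not match "retrieves" in the document. A neural encoder would place such related words close together in embedding space, which is the main reason RAG systems use one.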

The retrieved documents serve as contextual input to the generative model. They are inserted into the prompt alongside the user’s query, which places them in the model’s context window so the model can reference them while generating a response.
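Inserting retrieved passages into a prompt can be as simple as string templating. The sketch below is one possible layout, not a standard format. The `build_prompt` helper and its instruction wording are hypothetical. Numbering each passage is a common choice because it lets the model refer back to its sources.

```python
def build_prompt(question: str, passages: list[str]) -> str:
    """Place numbered retrieved passages ahead of the user's question.
    The instruction wording is a hypothetical example; real systems tune it."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer the question using only the context below. "
        "Cite passages by their [number].\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


prompt = build_prompt(
    "When was RAG introduced?",
    ["RAG was introduced in a 2020 paper by Lewis et al."],
)
print(prompt)
```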

The generative model then synthesizes a final answer using both the original query and the retrieved information. This architecture allows the model to perform reasoning and language generation while grounding its response in specific documents.
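The three components can be wired together as a short pipeline: retrieve, augment, generate. In the sketch below, the retriever and generator are passed in as plain callables, so any search backend or model client could be substituted. `KNOWLEDGE`, `keyword_retriever`, and `echo_generator` are hypothetical stand-ins with made-up example data that let the code run without an external service. In particular, `echo_generator` is not a language model.

```python
from typing import Callable

# A retriever maps (question, k) to passages; a generator maps a prompt to text.
Retriever = Callable[[str, int], list[str]]
Generator = Callable[[str], str]


def rag_answer(question: str, retrieve: Retriever, generate: Generator, k: int = 3) -> str:
    """Retrieve, augment, generate. Components are injected as callables."""
    passages = retrieve(question, k)                    # 1. retrieval
    context = "\n".join(f"- {p}" for p in passages)     # 2. augmentation
    prompt = f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
    return generate(prompt)                             # 3. generation


# Hypothetical knowledge source so the sketch runs offline.
KNOWLEDGE = {
    "refund": "Refunds are processed within 14 days.",
    "shipping": "Standard shipping takes 3-5 business days.",
}


def keyword_retriever(question: str, k: int) -> list[str]:
    words = question.lower().replace("?", "").split()
    return [text for key, text in KNOWLEDGE.items() if key in words][:k]


def echo_generator(prompt: str) -> str:
    # Stub for a language model call: it returns the first context line,
    # which makes it easy to see that the answer came from retrieval.
    first_line = prompt.splitlines()[1]
    return first_line.removeprefix("- ")


print(rag_answer("How long does a refund take?", keyword_retriever, echo_generator))
```

Keeping the components behind small interfaces also reflects the point about independent updates: the knowledge source can change without touching the generator.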

Retrieval Methods and Vector Databases

The effectiveness of a RAG system depends heavily on the quality of its retrieval process. Classical information retrieval systems rely on lexical (keyword) matching methods such as TF-IDF and BM25, which remain widely used. Modern RAG implementations increasingly complement or replace them with semantic search based on vector embeddings produced by neural encoders.
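To make keyword matching concrete, here is a minimal Okapi BM25 scorer in Python. It uses the common default parameters k1 = 1.5 and b = 0.75 and the non-negative IDF variant ln(1 + (N − n + 0.5) / (n + 0.5)), where N is the number of documents and n is the number containing the term. Production search engines tune these choices and add further details. The `bm25_scores` helper and the sample documents are illustrative only.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_scores(query: str, documents: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score each document against the query with Okapi BM25."""
    docs = [tokenize(d) for d in documents]
    if not docs:
        return []
    n_docs = len(docs)
    avgdl = sum(len(d) for d in docs) / n_docs
    # Document frequency: how many documents contain each term.
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for d in docs:
        tf = Counter(d)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue  # exact-term matching only
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            # Term-frequency saturation (k1) and document-length normalization (b).
            norm = tf[term] + k1 * (1 - b + b * len(d) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


docs = [
    "the cat sat on the mat",
    "dogs chase cats in the park",
    "vector databases store embeddings",
]
print(bm25_scores("cat mat", docs))
```

The second document scores zero even though it is about cats, because "cats" is not the exact term "cat". Semantic search is meant to close this vocabulary gap.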

Vector databases store high-dimensional embeddings representing documents or passages. During retrieval, similarity search algorithms identify the closest vectors to the query embedding, enabling semantic matching even when queries and documents use different wording.
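Cosine similarity is one common measure of closeness for this search. Dot product and Euclidean distance are also used, depending on the embedding model. For a query embedding q and a document embedding d of dimension n, cosine similarity compares the directions of the two vectors regardless of their length. When embeddings are normalized to unit length, it reduces to the plain dot product. Retrieval then returns the k documents in the collection D with the highest scores. For example, q = (1, 0) and d = (1, 1) give a similarity of 1/√2 ≈ 0.707:

```latex
\begin{aligned}
\operatorname{sim}(\mathbf{q}, \mathbf{d}) &= \frac{\mathbf{q} \cdot \mathbf{d}}{\lVert \mathbf{q} \rVert\,\lVert \mathbf{d} \rVert}
  = \frac{\sum_{j=1}^{n} q_j d_j}{\sqrt{\sum_{j=1}^{n} q_j^2}\,\sqrt{\sum_{j=1}^{n} d_j^2}} \\
\operatorname{sim}\big((1,0),(1,1)\big) &= \frac{1\cdot 1 + 0 \cdot 1}{1 \cdot \sqrt{2}} = \frac{1}{\sqrt{2}} \approx 0.707
\end{aligned}
```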

Several technology platforms have become closely associated with RAG deployments. For example, Pinecone offers a managed vector database service for storing and querying embeddings at scale. Weaviate, an open-source vector database, combines vector search with integrations for the machine learning models that generate embeddings, supporting knowledge retrieval in AI applications.

These systems let RAG pipelines search repositories containing millions of records efficiently. They typically do so with approximate nearest-neighbor indexes rather than by comparing the query with every stored vector.

RAG in Production AI Systems

Retrieval-Augmented Generation has become a foundational technique in many modern AI applications, particularly those requiring accurate knowledge retrieval or domain-specific information.

For example, the framework LangChain provides components for building RAG pipelines, including document loaders, text splitters, vector store integrations, and retrievers that connect language models with external knowledge sources such as document databases and APIs. Its prompt templates let developers insert retrieved context into a prompt before passing it to a generative model.

Another widely used framework is LlamaIndex, which focuses on ingesting and indexing external data for large language models and on supporting structured retrieval workflows. These frameworks allow organizations to integrate internal documentation, research archives, or customer support knowledge bases into AI systems without retraining the underlying language model.

Major AI platforms have also built retrieval into their enterprise AI products. For instance, Microsoft’s Azure platform pairs Azure AI Search with Azure OpenAI Service models so that organizations can query proprietary data stored in corporate knowledge systems.

Advantages of Retrieval-Augmented Generation

The primary advantage of Retrieval-Augmented Generation is its ability to ground AI responses in verifiable information. By incorporating retrieved documents into the generation process, RAG systems can produce answers that reference specific sources rather than relying exclusively on probabilistic recall.

Another important benefit is knowledge freshness. Because the external database can be updated independently of the language model, a RAG system can use new information as soon as it has been indexed, without costly retraining.
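The freshness argument comes down to this: updating knowledge is a write to a data store, not a training run. The sketch below illustrates the idea with a hypothetical `KnowledgeStore` class and simple word-overlap search. A real vector database would also re-embed the updated text before it becomes searchable.

```python
class KnowledgeStore:
    """Hypothetical minimal document store keyed by document ID."""

    def __init__(self) -> None:
        self.docs: dict[str, str] = {}

    def upsert(self, doc_id: str, text: str) -> None:
        # Adding or replacing a document is a data write; no model weights change.
        self.docs[doc_id] = text

    def delete(self, doc_id: str) -> None:
        self.docs.pop(doc_id, None)

    def search(self, query: str) -> list[str]:
        # Naive word-overlap matching stands in for a real retriever.
        terms = set(query.lower().split())
        return [text for text in self.docs.values() if terms & set(text.lower().split())]


store = KnowledgeStore()
store.upsert("returns-policy", "returns are accepted within 30 days")
before = store.search("returns policy")
# The policy changes: overwrite the document, and the next query sees it.
store.upsert("returns-policy", "returns are accepted within 60 days")
after = store.search("returns policy")
print(before, after)
```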

RAG also improves domain adaptability. Organizations can build specialized knowledge repositories containing technical manuals, legal documents, or internal policies. When the system retrieves information from these repositories, the generative model can produce responses tailored to a specific field or organization.

These characteristics make RAG particularly valuable in enterprise applications, research tools, and knowledge management systems where factual accuracy is critical.

Limitations and Technical Challenges

Despite its advantages, Retrieval-Augmented Generation introduces several technical challenges. The quality of generated responses depends strongly on retrieval accuracy. If the retriever fails to identify the most relevant documents, the generative model may produce incomplete or misleading answers.
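One common way to quantify retrieval accuracy is recall@k: the share of the documents known to be relevant that appear in the retriever’s top k results. The sketch assumes a small labeled evaluation set of hypothetical document IDs. The helper names are illustrative and do not come from any specific evaluation library.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant document IDs found among the top-k results."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)


def mean_recall_at_k(runs: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@k over labeled queries of (retrieved IDs, relevant IDs)."""
    if not runs:
        return 0.0
    return sum(recall_at_k(r, rel, k) for r, rel in runs) / len(runs)


# Hypothetical evaluation set: retriever output vs. IDs a reviewer marked relevant.
runs = [
    (["d3", "d1", "d7"], {"d1"}),        # the relevant document is ranked second
    (["d2", "d9", "d4"], {"d2", "d5"}),  # only one of two relevant documents found
]
print(mean_recall_at_k(runs, k=1), mean_recall_at_k(runs, k=3))
```

A low score shows that the generator is often missing the evidence it needs, whatever the quality of the language model.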

Another challenge involves context limitations in large language models. Retrieved documents must fit within the model’s context window, which restricts how much information can be included during generation. Systems must therefore use ranking and filtering mechanisms to ensure only the most relevant passages are passed to the model.
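The ranking and filtering step can be sketched as a greedy packer. It keeps the highest-scoring passages that fit a token budget and drops anything below a relevance threshold. The sketch assumes that the retriever returns (score, passage) pairs and that `min_score` is a hypothetical tuning parameter. Word counts serve as a crude proxy for tokens; a real system would count with the target model’s own tokenizer.

```python
def pack_context(
    ranked: list[tuple[float, str]],
    max_tokens: int,
    min_score: float = 0.0,
) -> list[str]:
    """Greedily select the best passages that fit within max_tokens."""
    selected: list[str] = []
    used = 0
    for score, passage in sorted(ranked, key=lambda pair: pair[0], reverse=True):
        if score < min_score:
            break  # filtering: every remaining passage scores even lower
        cost = len(passage.split())  # crude token estimate
        if used + cost > max_tokens:
            continue  # too long for the remaining budget; a shorter one may still fit
        selected.append(passage)
        used += cost
    return selected


ranked = [
    (0.91, "RAG retrieves passages before generation."),
    (0.85, " ".join(["filler"] * 50)),           # relevant but far too long
    (0.40, "Vector databases store embeddings."),
    (0.05, "Unrelated text about cooking."),      # below the relevance threshold
]
print(pack_context(ranked, max_tokens=12, min_score=0.1))
```

Greedy selection is a simple design choice. It favors relevance over budget utilization, so a long but relevant passage can be skipped in favor of shorter, lower-ranked ones.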

Latency can also increase in RAG systems because each query requires a retrieval step before generation begins. Efficient indexing and optimized vector search infrastructure are therefore critical for production deployments that must handle large volumes of requests.

RAG Compared With Fine-Tuning

Retrieval-Augmented Generation is often compared with model fine-tuning as a strategy for incorporating domain knowledge into AI systems. Although both methods enhance model capabilities, they address different problems.

Fine-tuning modifies a model’s parameters by training it on additional data, which allows the model to internalize domain-specific patterns. However, updating a fine-tuned model requires retraining whenever the knowledge base changes.

RAG avoids this limitation by leaving the underlying language model unchanged while connecting it to an external knowledge repository. Because the data source can be updated independently, organizations can maintain continuously evolving knowledge systems without retraining the model itself.

For this reason, RAG is widely used in applications where information changes frequently or where organizations need to integrate large collections of proprietary documents.

The Role of RAG in Modern AI Systems

Retrieval-Augmented Generation has become a central architecture in contemporary generative AI because it bridges the gap between static model knowledge and dynamic real-world information. By combining information retrieval with neural language generation, RAG systems enable artificial intelligence models to access up-to-date knowledge and produce responses grounded in external sources.

As generative AI continues to expand into enterprise software, research platforms, and digital assistants, RAG architectures are increasingly used to connect language models with structured and unstructured knowledge repositories. This integration allows AI systems to function not only as language generators but also as interfaces to large bodies of information.

The architecture therefore represents a key development in the evolution of generative AI systems, enabling models to move beyond static training data and interact directly with external knowledge during the reasoning and response generation process.

Disclaimer: The content on this page and all other pages is for informational purposes only. We use AI to develop and improve our content; we love to use the tools we promote.

© newvon | all rights reserved